The Lobotomy Pipeline: What Happens When AI Removes All Friction

I use LLMs every day. I’ve been vocal about it. I use them the way a surgeon uses a scalpel — I know the anatomy, I make the cut, the tool doesn’t decide where.

The problem isn’t the tool. The problem is everyone else.

The Exoskeleton Metaphor

An exoskeleton amplifies what you already have. Strap one on a soldier and they can carry more, move faster, endure more. Strap one on someone who never trained a day in their life and you get a person who can carry more weight than their skeleton should allow, until the exoskeleton is gone and they can’t carry anything at all.

That’s what LLMs are: exoskeletons.

They amplify existing knowledge. My writing has friction — I repeat myself, I loop back, I sometimes say the same thing three ways because I’m thinking out loud. I use AI to clean that up. The ideas are mine. The structure is mine. The AI is a copy editor, not a ghostwriter.

But what I see around me is people strapping on the exoskeleton before they’ve ever walked.

The Guy With The SKILL.md

A few weeks ago, someone in a dev community shared their SKILL.md file with obvious pride. They had written a detailed guide explaining to an LLM how to perform a git rebase.

Then they posted a follow-up: they had written another one for how to commit.

The responses were enthusiastic. “Great workflow!” “Sharing this!”

Here’s what nobody said: the LLM already knows how to rebase. It knew before you were born. Every git tutorial ever written is in its training data. The problem was never that the LLM didn’t know how to rebase.

The problem is that you don’t know how to rebase.

And instead of learning — instead of running the rebase yourself, making the conflict, resolving it, understanding why --onto exists — you outsourced it. Now you have a SKILL.md file that does rebases you don’t understand, and you feel productive.

You haven’t learned rebasing. You’ve learned to delegate rebasing. Those are not the same skill.
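
For the record, the reason --onto exists fits in a toy model. What follows is a sketch, not how git is implemented: history is a plain dictionary mapping each commit to its parent, and the names `ancestors`, `rebase_onto`, and the commit letters are made up for illustration. It assumes a linear history with no merges and no conflicts, which is exactly the part you only learn by doing it for real.

```python
from typing import Dict, List, Optional

# Toy history: commit name -> parent name (None for the root commit).
Parents = Dict[str, Optional[str]]


def ancestors(parents: Parents, tip: str) -> List[str]:
    """Return tip followed by all of its ancestors, newest first."""
    chain: List[str] = []
    cur: Optional[str] = tip
    while cur is not None:
        chain.append(cur)
        cur = parents[cur]
    return chain


def rebase_onto(parents: Parents, newbase: str, upstream: str, branch: str) -> str:
    """Toy model of `git rebase --onto <newbase> <upstream> <branch>`.

    Replays the commits reachable from branch but not from upstream
    on top of newbase, and returns the new tip. Mutates `parents`.
    """
    excluded = set(ancestors(parents, upstream))
    to_replay = [c for c in ancestors(parents, branch) if c not in excluded]
    to_replay.reverse()  # replay oldest first
    tip = newbase
    for commit in to_replay:
        copy = commit + "'"  # a rebased commit is a new commit
        parents[copy] = tip
        tip = copy
    return tip


# main is A-B-C, feature is B-D-E, topic is E-F-G (topic was started from feature).
history: Parents = {"A": None, "B": "A", "C": "B", "D": "B", "E": "D", "F": "E", "G": "F"}

# Plain rebase of topic onto main: upstream and new base are both C,
# so the feature commits D and E come along for the ride.
plain = dict(history)
plain_tip = rebase_onto(plain, "C", "C", "G")
print(ancestors(plain, plain_tip))  # ["G'", "F'", "E'", "D'", 'C', 'B', 'A']

# With --onto: new base is C, but "upstream" is E, so only F and G move.
onto = dict(history)
onto_tip = rebase_onto(onto, "C", "E", "G")
print(ancestors(onto, onto_tip))  # ["G'", "F'", 'C', 'B', 'A']
```

The second call is the whole point: --onto lets you say "where to land" and "what to leave behind" separately. If that distinction only exists in a SKILL.md, it does not exist in your head.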

No Friction, No Learning

People who never cooked in their life didn’t fail to learn cooking because cooking is hard. They didn’t learn because someone else always did it — mom, a partner, a roommate, delivery apps.

The friction of cooking is the learning. The burned onions. The oversalted pasta. The first time you understand why you deglaze the pan. You don’t get that from watching someone else cook. You don’t get it from having someone describe cooking to you.

You get it from doing it wrong until you do it right.

This is how all knowledge works. It transfers through struggle, not through observation.

LLMs have eliminated the struggle. Not for me — I had the struggle years before LLMs existed. But for anyone starting now? The friction is gone. Every error message gets fixed before they understand what caused it. Every architecture decision gets made before they understand the tradeoffs. Every rebase happens without them ever resolving a conflict manually.

They’re building on a foundation they’ve never felt.

The Influencer Cloning Machine

I’ve shipped several projects publicly. Two days after each release, I see the clones appear.

Not forks. Not contributions. Clones — same project, different name, different README, same bones. Sometimes they don’t even rename the internal variables.

The influencers post about them. “I built X.” The comments say “amazing work.” Nobody asks about the implementation. Nobody asks if it’s tested. Nobody asks what happens under edge cases.

I know what happens under edge cases because I hit them. I spent the time. I wrote the tests. I ran it in conditions it wasn’t designed for and fixed the failures.

The clone ships without that. It ships with the happy path and a good README. The users install it. They hit the edge cases I already fixed — three months ago, in my version, quietly. They open issues on the clone. The clone author doesn’t know the answer because they didn’t build it. They ask an LLM. The LLM guesses.

You become the QA for someone else’s half-assembled copy of your work.

This isn’t a new problem. Open source has always had this. But LLMs have made it frictionless. Before, you had to understand enough of a codebase to copy it. You had to read it, adapt it, run it. That friction was a filter. You learned something in the process, even if your intentions were bad.

Now you paste it into a context window and ask for a rename. Zero friction. Zero learning. Perfect confidence.

What Gets Lost

The exoskeleton doesn’t just do the work for you. It prevents the work from changing you.

Lifting weights changes your body because the weight resists you. The resistance is the mechanism. If the exoskeleton absorbs the resistance, you never change — you just do more reps without effect.

When an LLM resolves your merge conflict, you skip the moment where you understand why the conflict existed. When it writes your error handling, you skip the moment where you understand what can go wrong. When it designs your schema, you skip the moment where you understand what data actually means in your system.

You ship faster. You understand less. Your confidence increases as your competence decreases. This is not a metaphor — it’s what I watch happening in real time, in public, with people posting about it enthusiastically.

The generation entering engineering now may be the most productive and least capable in history. They can ship more code than any junior developer before them. They understand less of it than any junior developer before them.

That gap will close eventually — in production, at 3am, when the exoskeleton runs out of battery.

The 0day The NSA Doesn’t Have

A few weeks ago I found a critical vulnerability in an influencer’s platform. The platform was “suffering from success” — their words, in a recent post about scaling challenges.

My exploit: I typed /admin in the browser.

I was redirected to an admin login page. The login page had a registration form. I registered. No email confirmation required. No invite code. No approval flow. I was immediately logged in as super admin. Full access. I had 7 days to validate my email before getting suspended — which means the platform was thoughtfully going to warn me before removing my super admin privileges.

STUXNET v47. Zero-click. Nation-state level.

My theory on how this happened: the influencer vibe-coded a purple dashboard with an LLM, got the MVP working, then said something like “move the admin login to /admin, we need to build the public-facing part now.”

The LLM moved the route. It had no reason to think about what “open registration on the admin panel” means. It wasn’t asked to think about that. It did exactly what was requested — efficiently, confidently, completely.

The influencer saw the purple dashboard load at /admin. Looked good. Shipped it.

Nobody tested what happened if you clicked “Register” instead of “Login.” Nobody asked the LLM “wait, should anyone be able to register here?” The friction of that thought — the moment where a developer who understood auth would have paused — was gone.
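
To make that missing pause concrete, here is a minimal sketch of the question in code form. I have not seen the platform's source, so everything here is hypothetical: `AdminRegistry`, `RegistrationDenied`, the invite tokens, and the "pending" role are illustrative names, not anything the platform used. The assumption is simply that admin sign-up should require an invite and should never grant privileges on its own.

```python
from dataclasses import dataclass, field
from typing import Dict, Set


class RegistrationDenied(Exception):
    """Raised when someone tries to register as admin without an invite."""


@dataclass
class AdminRegistry:
    # Hypothetical in-memory store; a real system would use a database.
    invites: Set[str] = field(default_factory=set)
    admins: Dict[str, str] = field(default_factory=dict)  # email -> role

    def register(self, email: str, invite_code: str = "") -> str:
        """Register an admin account only if a valid invite is presented."""
        # The pause: should anyone be able to register here? No.
        if invite_code not in self.invites:
            raise RegistrationDenied("admin registration requires a valid invite")
        self.invites.remove(invite_code)  # invites are single use
        # Even with an invite, the account starts without privileges
        # until an existing admin approves it.
        self.admins[email] = "pending"
        return self.admins[email]


registry = AdminRegistry(invites={"tok-123"})

try:
    registry.register("stranger@example.com")  # typed /admin, clicked Register
except RegistrationDenied as exc:
    print("denied:", exc)

print(registry.register("ops@example.com", "tok-123"))  # pending
```

None of this is sophisticated. It is one `if` statement that only gets written when someone asks who is allowed through the door.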

This is not a security post. I’m not publishing CVEs. The point is simpler: the LLM built what was asked. Nobody understood what was built.

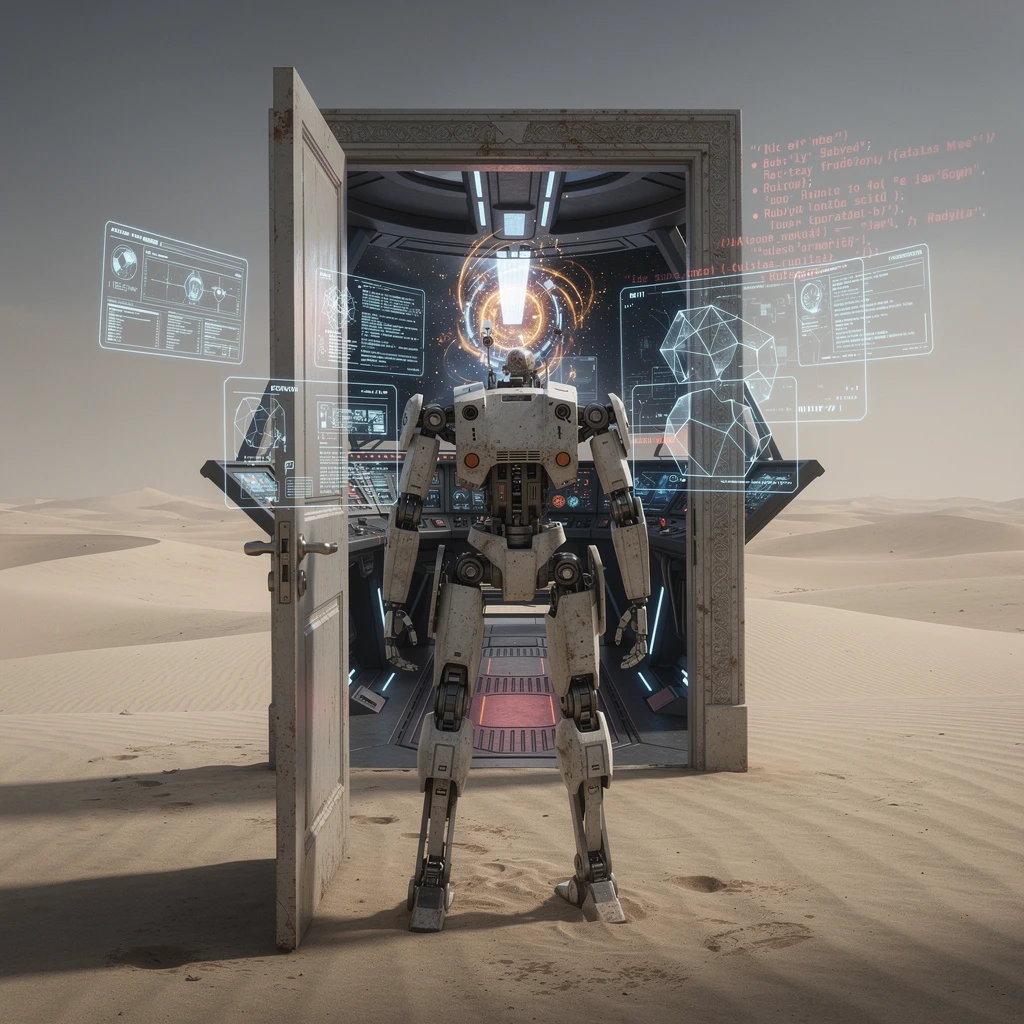

The exoskeleton assembled the door. No lock. No frame. A door standing alone in the desert.

How I Use It

I repeat myself when I write. I know this. People who know me know this. I use AI to find those loops and cut them. The ideas survive. The redundancy doesn’t.

I’m also not a native English speaker. I speak five other languages and several dialects. That creates a specific problem: sometimes an idea surfaces in Arabic, or Spanish, or French — and I need to land it in English. Straightforward enough.

Except languages don’t map cleanly onto each other. French, for example, doesn’t have a direct equivalent of “cheaper.” You have to say moins cher — less expensive. I’m a native French speaker. I know this. But after years of moving between languages, the word gets shadowed. I know the concept exists — I’ve expressed it in Arabic, in Spanish — so somewhere in the wiring, French native or not, I hit an undefined error. The reference is there. The surface form isn’t loading.

So I write the sentence in French. The LLM translates it to English and writes “cheaper” — not “less expensive.” Idiomatic, natural, correct. I read it back and the concept gets englishified. Next time, the mapping is there.

That’s an exoskeleton use. I have the muscle. The tool helps me move faster.

What I don’t do is ask it to have the ideas. What I don’t do is ask it to understand the codebase for me. What I don’t do is skip the friction and call the result mine.

The difference matters. Not morally — I don’t care about purity. Practically: the friction I skipped is the knowledge I don’t have. And the knowledge I don’t have will surface eventually, at the worst possible moment, in front of the worst possible audience.

The Uncomfortable Conclusion

We’re building a generation of engineers who are very good at prompting and very bad at engineering. They’re not stupid. They’re not lazy. They just never had to be anything else, because the friction was gone before they got here.

The guy with the SKILL.md isn’t an outlier. He’s early. In two years, writing a SKILL.md for operations you don’t understand will be standard practice. It will have a name. There will be courses. The courses will be sold by influencers who cloned the original and never tested the edge cases.

And the people who actually understand what’s happening will be buried under support tickets from users of exoskeletons that nobody inside the building actually built.

I still use LLMs daily. The exoskeleton is useful. But I earned the legs first.
